A colleague and friend of mine wrote a cautionary comment on last month’s post on glyphosate, sharing allegations about one of the authors, Stephanie Seneff (Samsel and Seneff 2013). Critics point out that Seneff is not a biologist but a computer scientist, and a known anti-GMO advocate. There are several issues I would like to raise in relation to this oft-quoted objection: activist scientists, industry bullying of scientists, establishing causation, and Type I vs. Type II errors. In this and subsequent posts, I will address these issues in reverse order; they are fundamental cruxes in the use of science for the common good, and it is imperative to examine them at this turning point in global society.

Several thoughtful recent articles and books about hypothesis testing and Type I vs. Type II errors have led me to examine the various analogies used to explain how decisions about action are made under scientific uncertainty.

Type I errors are those in which an effect is detected where there is none, a false positive in other words. Type II errors describe those errors in which an effect is not detected where there is one, a false negative in common parlance.
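For readers who think in code, the two definitions above can be written as a small Python sketch. The function name `classify_outcome` and its two arguments are my own illustrative choices, not taken from any of the sources cited here.

```python
def classify_outcome(effect_exists: bool, effect_detected: bool) -> str:
    """Name the cell of the two-by-two grid that one test result falls into."""
    if effect_detected and not effect_exists:
        return "Type I error (false positive)"
    if effect_exists and not effect_detected:
        return "Type II error (false negative)"
    if effect_exists and effect_detected:
        return "correct detection (true positive)"
    return "correct rejection (true negative)"


# Walk through all four combinations of reality and finding.
for exists in (True, False):
    for detected in (True, False):
        print(f"exists={exists!s:5} detected={detected!s:5} -> "
              f"{classify_outcome(exists, detected)}")
```

Only two of the four cells are errors; the rest of this post is about which of those two we should fear more in a given situation.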

A fascinating article in Scientific American argues that human psychology is responsible for some of the errors we tend to make in this regard, because in some cases making a Type I error is potentially less harmful, and therefore more evolutionarily adaptive, than making a Type II error (Shermer 2009). The example used is this: a Type I error, seeing a pattern where there is no pattern – like the wind in grass – might cause an early human to see a predator where there is no predator. But that’s OK. There is no real cost to such a mistake. On the other hand, a Type II error, not seeing a pattern where there is one – a predator in the grass – can be much worse. A mistake like this can result in death for the individual, or even the whole family or tribe. When death is involved, it is obviously better to err on the side of caution. The article goes on to discuss how this results in false attributions of “agenticity,” the idea that there is some thinking force behind patterns in the world. This logical problem can be laid out as a two-by-two grid: whether a predator is actually present, against whether one is perceived.
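The asymmetry in the predator example can be made concrete with a rough expected-cost sketch. The cost figures below are numbers I made up purely for illustration (they do not come from the Scientific American article), and the sketch assumes that fleeing from a real predator costs nothing extra.

```python
# Illustrative costs in arbitrary units. These are assumptions for the
# sake of the example, not figures from the cited article.
COST_FALSE_ALARM = 1.0         # Type I: fleeing from wind in the grass
COST_MISSED_PREDATOR = 1000.0  # Type II: ignoring a real predator


def expected_cost_of_fleeing(p_predator: float) -> float:
    """Fleeing only wastes effort when no predator is actually there."""
    return (1.0 - p_predator) * COST_FALSE_ALARM


def expected_cost_of_staying(p_predator: float) -> float:
    """Staying put is only costly when a predator really is there."""
    return p_predator * COST_MISSED_PREDATOR


def should_flee(p_predator: float) -> bool:
    """Flee whenever that has the lower expected cost."""
    return expected_cost_of_fleeing(p_predator) < expected_cost_of_staying(p_predator)


def break_even_probability() -> float:
    """Probability of a predator at which fleeing and staying cost the same."""
    return COST_FALSE_ALARM / (COST_FALSE_ALARM + COST_MISSED_PREDATOR)


print(should_flee(0.01))         # a 1% chance of a predator
print(break_even_probability())  # threshold above which fleeing is cheaper
```

With costs this lopsided, fleeing is the cheaper choice even when a predator is very unlikely, which is the sense in which frequent Type I errors can be adaptive.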

An article in the Industrial Psychiatry Journal draws an analogy between scientific hypotheses and decisions in law (Banerjee et al. 2009). A Type I error – or false positive – is comparable to an innocent person being jailed or even executed, which is rightly seen as the worst, least just outcome, hence our presumption of “innocent until proven guilty.” Of course, we have all seen that many of these worst-case scenarios occur in real life. In law, a Type II error – a false negative, in which a guilty person is released – is also potentially bad, particularly if that person goes on to commit other crimes. Still, this outcome is seen as less horrific than the conviction of an innocent person.

Some of the best recent work on the logic of assessing causation and risk is What’s the Worst That Could Happen? by Greg Craven (2009), an ingenious book about logically assessing the risks of climate change. It began as a somewhat corny YouTube video (complete with Viking headdress) that eventually garnered millions of views (Craven 2007).

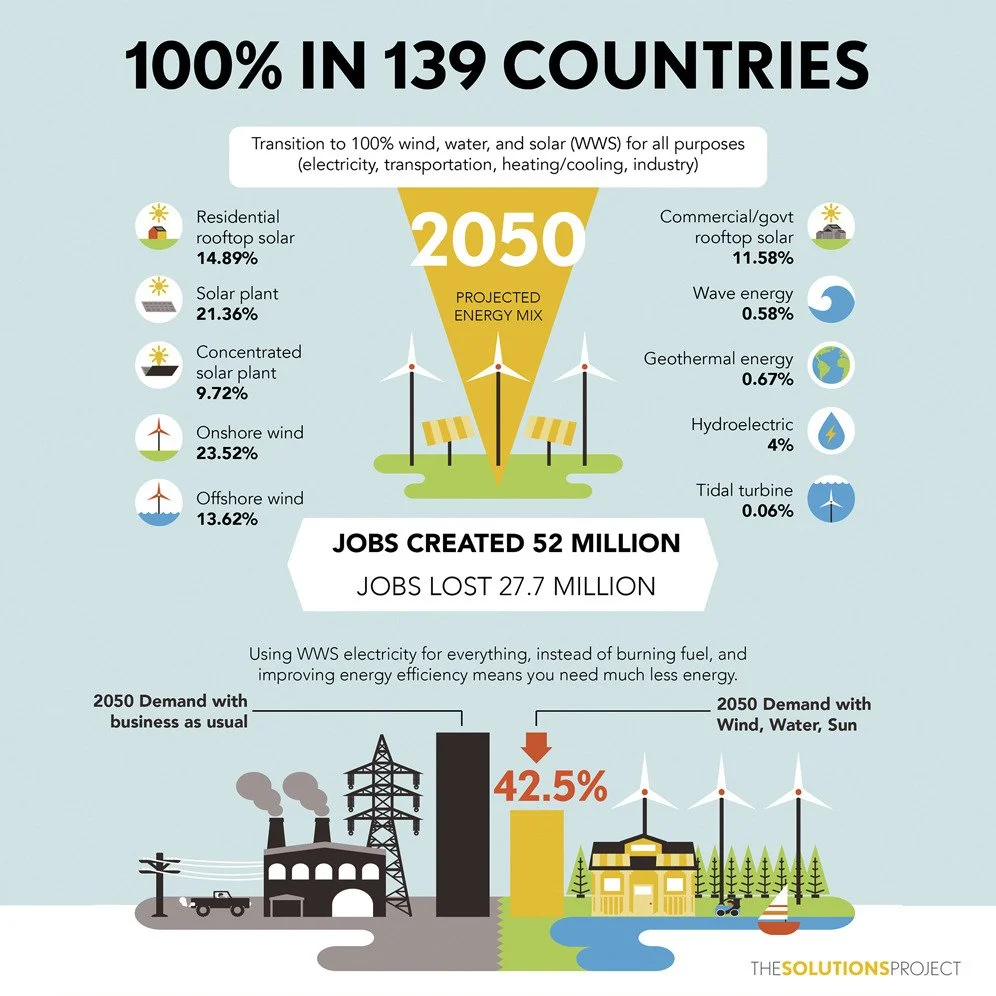

Here’s how he lays out the logic of the possible outcomes of climate change:

With a Type I error, there is no climate change, but we wrongly regulate. Even if we grant this outcome, despite overwhelming evidence to the contrary, and even if we grant a global economic depression, there are still some benefits alongside the economic costs. It is easily verifiable that fewer people would die from the direct effects of coal-fired power plants, completely aside from the impacts of climate change. On the other hand, in the worst predictions of a Type II error in this scenario, we all die. At the least, we face dire social, political, environmental, and health consequences.
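One way to read this kind of grid is as a worst-case comparison: for each action, find the worst thing that could happen, then pick the action whose worst case is least bad. The sketch below does that in Python. The severity scores are ordinal placeholders that I assigned myself for illustration; they are not values from Craven’s book or video, and the action and scenario labels are likewise my own.

```python
# Severity of each outcome on a 0 (fine) to 10 (catastrophe) scale.
# The scores are illustrative assumptions, not values from Craven's work.
GRID = {
    "regulate": {
        "climate change is real": 2,      # costs paid, worst harms averted
        "climate change is not real": 5,  # Type I error: needless economic cost
    },
    "do not regulate": {
        "climate change is real": 10,     # Type II error: catastrophe
        "climate change is not real": 0,  # nothing lost
    },
}


def worst_case(action: str) -> int:
    """Most severe outcome that could follow from the given action."""
    return max(GRID[action].values())


def least_bad_action() -> str:
    """Action whose worst case is the least severe (a minimax choice)."""
    return min(GRID, key=worst_case)


for action in GRID:
    print(f"{action}: worst case severity {worst_case(action)}")
print("least bad action:", least_bad_action())
```

The conclusion depends only on the ordering of the scores: as long as the Type II catastrophe is scored as worse than the Type I economic cost, regulating has the less severe worst case.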

Greg Craven on YouTube – What’s the Worst That Can Happen?

When it comes to chemical regulation, I would argue that the stakes are nearly as high as with climate change, and likewise weighted against making a Type II error. In the case of a Type I error – a false positive – a chemical could be taken off the market that might have been helpful or less expensive than an alternative. While this is an ill, it is one against which we are very well defended. The Type II error in this case is the error we are making rampantly, as documented abundantly by the CDC Biomonitoring Project, the President’s Cancer Panel, and the American Academy of Pediatrics: people, including young children, fall ill and die. They lose IQ points, suffer poor health, risk death, and generally face the gravest of evils: the loss of life, liberty, and the pursuit of happiness.

In chemical regulation, the scientists I have interviewed say that they try very, very hard to avoid Type I errors because chemical companies exert undue pressure on the process. These companies will sue and slander scientists who make this kind of error, in the same way that industry has with climate change. A case in point is the persecution of Tyrone Hayes for his research on atrazine, beautifully documented in The New Yorker (Aviv 2014). The Union of Concerned Scientists is working to raise funds to protect scientists who are persecuted in this way. Even when the evidence is beyond a reasonable doubt, huge economic forces and astonishingly wealthy companies do everything in their power to cast doubt on the case for regulation.

Because this two-by-two square involves human deaths, it is more analogous to not seeing a predator than to releasing a guilty person. In the justice analogy, unjust death is more likely to result from a Type I error, through the death penalty, than from a Type II error. In the predator and chemical regulation analogies, unjust death is more likely to result from a Type II error. The precautionary principle would indicate that much more caution should be applied in models where the Type II error is more calamitous. Science denial on behalf of the status quo, which avoids Type I errors at all costs, results in very bad consequences, both for individuals and for humanity. It results in ridiculous outcomes like the one we are currently experiencing, with virtually no action on climate change, the greatest threat to human civilization ever, aside from the threat of all-out nuclear war.

The point of all this is that while the logical pattern of these problems is similar, the outcomes, the gains or losses in common and individual goods, are entirely unequal. And while one would like to think that any bias introduced into this pattern came entirely from a logical calculation of these goods and ills, this is very far from the case: first, because there is a huge bias toward the status quo; second, because very large entities (corporations) care about their bottom lines far more than the common good, even if that entails others’ deaths; and third, because industry can exert pressure on the process and thinking of supposedly objective science and scientists, pushing them toward the outcome it desires. This outside pressure directly affects the behavior and science of scientists in the employ of industry, like those who did bogus work for the tobacco industry. It also affects, in more subtle but still serious ways, well-intentioned scientists who will be bullied if they are wrong in the direction industry does not want them to go.

There is also a bias on the part of nearly all of us in cases like preventing climate change or giving up handy chemicals that help people: we do not want to find out that we must be inconvenienced to avoid terrible hazards in the future. We tend to completely neglect the consequences of Type II errors that support the status quo or business as usual. And we forget that science and scientists can be wrong in the other direction, by underestimating harm. The Arctic can melt faster, and chemicals can cause more autism and lower IQs, than anyone ever predicted.

Effect size is another important issue, one I cannot address adequately in an already long blog post. But we must consider that while a wrong decision about a predator or an unjust decision in law affects only a few people, runaway global climate change will negatively impact millions, possibly billions, possibly every living being on the planet. Excess use of chlorpyrifos not only killed my daughter and many others like her; it has also affected the intelligence of many, if not most, children. Bruce Lanphear is excellent on the subject of the shifting bell curve of intelligence among today’s pre-polluted generation of children (PANNA 2011).
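To see why a shift in the bell curve matters so much, here is a back-of-the-envelope Python sketch. It assumes IQ is normally distributed with a mean of 100 and a standard deviation of 15, and it assumes a 5-point downward shift in the mean and a cutoff of 70; all of these numbers are my own illustrative assumptions, not figures quoted from Lanphear or PANNA.

```python
from statistics import NormalDist

# Illustrative assumptions, not figures from the cited sources.
BASELINE_MEAN = 100.0
SHIFTED_MEAN = 95.0   # a hypothetical 5-point population-wide shift
STD_DEV = 15.0
CUTOFF = 70.0         # a score two standard deviations below baseline


def share_below_cutoff(mean: float) -> float:
    """Fraction of a normally distributed population scoring below CUTOFF."""
    return NormalDist(mu=mean, sigma=STD_DEV).cdf(CUTOFF)


before = share_below_cutoff(BASELINE_MEAN)
after = share_below_cutoff(SHIFTED_MEAN)

print(f"share below {CUTOFF:.0f} before the shift: {before:.2%}")
print(f"share below {CUTOFF:.0f} after the shift:  {after:.2%}")
print(f"ratio: {after / before:.2f}")
```

Under these assumptions, a shift that looks small for any one child roughly doubles the share of the population in the lowest tail, which is why population-wide effects deserve so much attention.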

It should also be acknowledged that any schema such as these grossly oversimplifies the complexities of real life. But these logical models are useful in epidemiology and assessment of risk precisely because they narrow the possibilities down to broad outlines.

Back to Samsel and Seneff: they are not proposing harms of glyphosate in isolation; rather, they are beginning to theorize about mechanisms by which harms already documented in the epidemiological data could occur. Their theory is far from conclusive, but it is nevertheless suggestive. It goes to the biological plausibility of an established hypothesis: that glyphosate, far from being innocuous, harms people. Glyphosate is obviously not the only pernicious chemical, but it is one that has been relatively neglected. Biological plausibility is one of the Bradford Hill criteria for establishing causation in science. More on that soon. It is perhaps not surprising that ideas about biological plausibility based on indirect mechanisms come not from specialists in cancer or autism, or epidemiologists, or chemists, but from polymaths who step back and try to see the whole picture. Often, that is how breakthrough ideas occur. We need more of these kinds of insights and discussions about how technology and science can benefit, or conversely harm, the things and people we value the most.

References

Aviv R. 2014. A valuable reputation. The New Yorker, February 10. Available at http://www.newyorker.com/magazine/2014/02/10/a-valuable-reputation

Banerjee A, Chitnis UB, Jadhav SL, Bhawalkar JS, Chaudhury S. 2009. Hypothesis testing, type I and type II errors. Ind Psychiatry J 18(2):127-131.

Craven G. 2007. The most terrifying video you will ever see. YouTube. Available at https://www.youtube.com/watch?v=zORv8wwiadQ

Craven G. 2009. What’s the worst that could happen? New York: Penguin Group.

Peeples L. ‘Little things matter’ exposes big threats to children’s brains. Huffington Post, November 20.

Pesticide Action Network of North America (PANNA). 2011. Little things matter: Shifting IQ down a notch. Available at http://www.panna.org/blog/little-things-matter-shifting-iqs-down-notch

Samsel A, Seneff S. 2013. Glyphosate’s suppression of Cytochrome P450 enzymes and amino acid biosynthesis by the gut microbiome: Pathways to modern diseases. Entropy 15: 1416-1463.

Shermer M. 2009. Why people believe invisible agents control the world. Scientific American, June 1. Available at http://www.scientificamerican.com/article/skeptic-agenticity/